The delayed Henon map,

offers insight into high-dimensional dynamics through its adjustable parameter d. In the previous post, Delayed Henon Map Sensitivities, we looked at how sensitive the output is to perturbations in each of the map's time lags, using partial derivatives. Because the function is known, it is simple to determine the lag space as well as the embedding dimension of the system. However, as we will see later, the same method can be used to find the lag space and embedding dimension of unknown systems using artificial neural networks. Some interesting questions arise when you analyze neural networks trained on the delayed Henon map and its inverted counterpart, so we will first look at the inverted delayed Henon map.

The delayed Henon map can be inverted quite easily by separating the time-delayed form into two equations such as the following,

After a little algebra, you get

Finally, we replace with

to obtain the inverted map,
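As a worked sketch of the inversion, assume the delayed Henon map takes the form commonly given by Sprott, $x_n = 1 - a x_{n-1}^2 + b x_{n-d}$. This form and the symbols $a$ and $b$ are assumptions here, and the route below may differ in detail from the two-equation separation described above. Solving for the oldest lag and relabeling the indices gives:

$$
\begin{aligned}
x_n &= 1 - a x_{n-1}^2 + b x_{n-d} \\
x_{n-d} &= \frac{x_n - 1 + a x_{n-1}^2}{b} \\
x_n &= \frac{x_{n+d} - 1 + a x_{n+d-1}^2}{b} \qquad (n \to n + d) \\
x_n &= \frac{x_{n-d} - 1 + a x_{n-d+1}^2}{b} \qquad (\text{time reversed})
\end{aligned}
$$

The last line reads the sequence backward in time, so that the inverted map again steps forward from past values. Under this assumed form, the only nonzero partial derivatives are $\partial x_n / \partial x_{n-d} = 1/b$ and $\partial x_n / \partial x_{n-d+1} = 2 a x_{n-d+1} / b$.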

After inversion, the attractor becomes a repellor, and any initial condition on the original attractor will wander off to infinity. Because the inverted orbit diverges, we cannot estimate the sensitivities directly from the inverted map. Instead, we can calculate a time series from the righted (non-inverted) delayed Henon map and feed that data into the partial derivative equations of the inverted delayed Henon map. Using this process on 10,000 points from the righted delayed Henon map (with the first 1,000 points removed), we obtain the following sensitivities,
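The procedure described above can be sketched in Python. This is a minimal illustration, not the code used for this post. It assumes the map has the form x_n = 1 - a*x_{n-1}^2 + b*x_{n-d}, that the inverted map is x_n = (x_{n-d} - 1 + a*x_{n-d+1}^2)/b, and that a sensitivity is the mean absolute partial derivative over the time series. The values a = 1.6, b = 0.1, d = 4, the initial history of 0.1, and all function names are hypothetical choices made for the example. It keeps 10,000 points after discarding 1,000, which is one reading of the point counts given above.

```python
import numpy as np


def delayed_henon_series(n_points, d=4, a=1.6, b=0.1, n_discard=1000, x0=0.1):
    """Iterate the (assumed) righted map x_n = 1 - a*x_{n-1}^2 + b*x_{n-d}.

    Returns n_points values after dropping the first n_discard iterates.
    """
    total = n_points + n_discard
    x = np.empty(total + d)
    x[:d] = x0  # assumed constant initial history
    for n in range(d, total + d):
        x[n] = 1.0 - a * x[n - 1] ** 2 + b * x[n - d]
    return x[d + n_discard:]


def forward_sensitivities(x, d, a=1.6, b=0.1):
    """Mean |dx_n/dx_{n-j}| for lags j = 1..d of the righted map (d >= 2)."""
    s = np.zeros(d)
    s[0] = np.mean(np.abs(-2.0 * a * x[d - 1:-1]))  # lag 1: -2*a*x_{n-1}
    s[d - 1] = abs(b)                               # lag d: constant b
    return s


def inverted_sensitivities(x, d, a=1.6, b=0.1):
    """Mean |dx_n/dx_{n-j}| for the (assumed) inverted map, for d >= 2.

    The derivatives are evaluated on data from the righted map, because
    the inverted map itself diverges when iterated.
    """
    s = np.zeros(d)
    x_lag = x[1:len(x) - d + 1]                          # x_{n-d+1}
    s[d - 2] = np.mean(np.abs(2.0 * a * x_lag / b))      # lag d-1
    s[d - 1] = abs(1.0 / b)                              # lag d: constant 1/b
    return s


if __name__ == "__main__":
    d = 4
    series = delayed_henon_series(10_000, d=d)
    print("righted :", forward_sensitivities(series, d))
    print("inverted:", inverted_sensitivities(series, d))
```

Under these assumptions, the inverted sensitivities are the righted ones divided by b and moved to different lags: the constant term b at lag d becomes 1/b at lag d, and the data-dependent term at lag 1 reappears, scaled by 1/b, at lag d-1.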

Here are a few other maps, their sensitivities, their inverted equations, and those sensitivities:

Original Henon map [1]

Equation

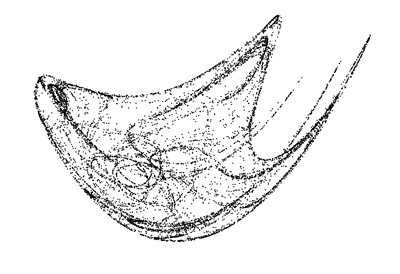

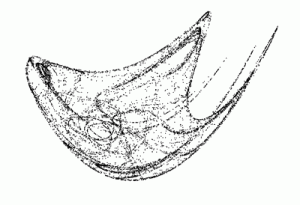

Sensitivities

Inverted Equation

Inverted Sensitivities

Discrete map from the preface of Ref. [2]

Equation

Sensitivities

Inverted Equation

Inverted Sensitivities

References:

- Henon M. A two-dimensional mapping with a strange attractor. Commun Math Phys 1976;50:69–77.

- Sprott JC. Chaos and time-series analysis. New York: Oxford; 2003.

This post is part of a series:

- Delayed Henon Map Sensitivities

- Inverted Delayed Henon Map

- Modeling Sensitivity using Neural Networks